Incident response is always a cat-and-mouse game. Organizations spend heavily on people and technology to help protect their enterprise, while threat actors continue to find new and unique ways to bypass those controls. We’ve seen this trend continue over time, whether in Locky’s shift to MHTML files or in the delivery of malicious PowerPoint show files. The PhishMe intelligence team has noticed another change, this one by the actors who are phishing for login credentials, and their tactics reveal that they are actively working to bypass security controls.

Many security technologies inspect attachments for URLs to determine whether they are malicious. If a malicious URL is found, the email is discarded. One way to avoid delivering a URL is to deliver the phishing form as a local file, by attaching an HTML page to the phishing email. When the victim opens the attachment in a browser by double-clicking it, most of the components needed to create the look and feel of the form are contained in the attachment itself, though some images are loaded from the web, sometimes even from the spoofed brand’s legitimate site.

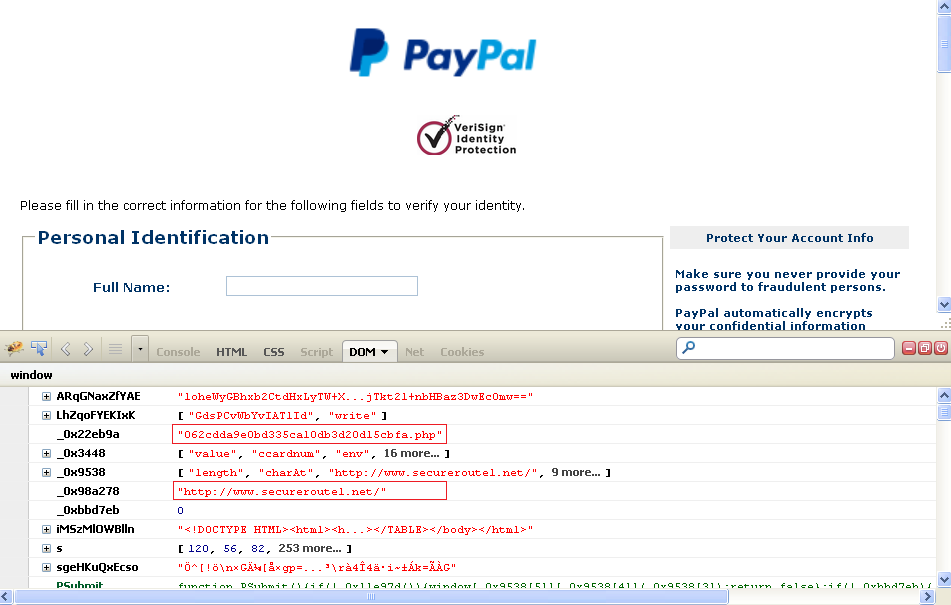
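As a rough illustration of the URL inspection described above, the sketch below walks the MIME parts of a message with Python’s standard `email` package and pulls literal `http(s)://` URLs out of any HTML attachment. The function name `urls_in_html_attachments` and the simple regular expression are assumptions for illustration, not how any particular gateway works. A relative or script-generated form action never appears in its output.

```python
import email
import re
from email import policy

# Deliberately naive pattern for absolute http(s) URLs; it ignores relative
# links and anything assembled by JavaScript at runtime.
URL_RE = re.compile(r"https?://[^\s\"'<>)]+", re.IGNORECASE)


def urls_in_html_attachments(raw_message: bytes) -> dict[str, list[str]]:
    """Map each HTML attachment's filename to the literal URLs found in it."""
    msg = email.message_from_bytes(raw_message, policy=policy.default)
    found: dict[str, list[str]] = {}
    # iter_attachments() skips the body part and yields the attached parts.
    for part in msg.iter_attachments():
        if part.get_content_type() != "text/html":
            continue
        html = part.get_content()  # already decoded from base64/quoted-printable
        found[part.get_filename() or "(unnamed)"] = URL_RE.findall(html)
    return found
```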

To research the online footprint of the phishing attack further, one needs to examine the source code of the HTML attachment to determine whether it refers to any online content and to find the value of the HTML form’s Action attribute. That value, known as the “POST script” or “Action URL,” is typically a PHP script hosted on a compromised server. In most cases the Action URL is easy to identify, and investigators can readily automate the task; however, some threat actors now obfuscate the code of their attachments so heavily that a dedicated tool is needed even to spot the Action URL manually. The Firefox add-on Firebug can display the Action URL components in plain text in the DOM (Document Object Model) tab of the Firebug console, without the need to reverse-engineer the attachment’s entire source code or complete the form in a sandbox.

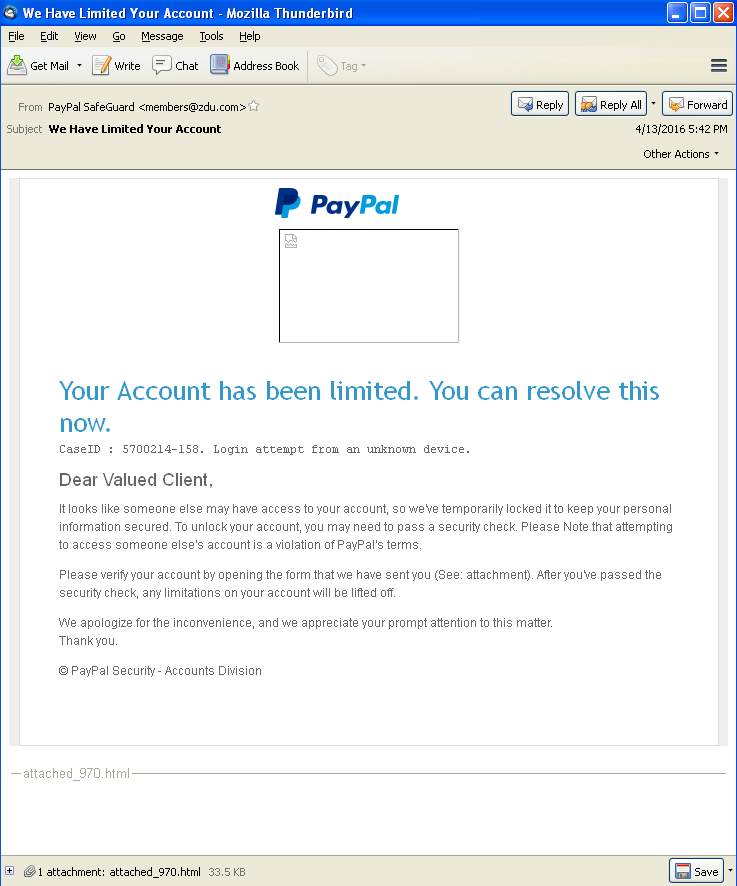
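When an attachment is not obfuscated, the automated identification mentioned above can be as simple as parsing the HTML. The following minimal sketch, built on Python’s standard `html.parser` module, collects `<form action>` values and absolute `src`/`href` URLs; the class and function names are hypothetical. Because it reads only static markup, it finds nothing when the form is written out by script at runtime, which is exactly the gap that a rendered DOM view such as Firebug’s fills.

```python
from html.parser import HTMLParser


class FormActionFinder(HTMLParser):
    """Collect <form action=...> values and URLs of remotely loaded resources."""

    def __init__(self) -> None:
        super().__init__()
        self.actions: list[str] = []
        self.resources: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)  # tag and attribute names arrive lowercased
        if tag == "form" and attr_map.get("action"):
            self.actions.append(attr_map["action"])
        # Images, scripts, and stylesheets the page pulls from the web.
        for key in ("src", "href"):
            value = attr_map.get(key)
            if value and value.startswith(("http://", "https://", "//")):
                self.resources.append(value)


def inspect_attachment(html: str) -> tuple[list[str], list[str]]:
    """Return (form actions, remote resource URLs) found in static HTML."""
    finder = FormActionFinder()
    finder.feed(html)
    finder.close()
    return finder.actions, finder.resources
```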

The phish in question target PayPal customers and are delivered as HTML files attached to spam messages like the one seen in Figure 1 below.

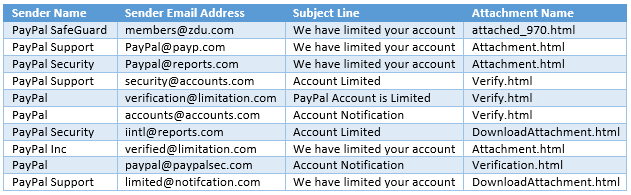

Table 1 below summarizes a few details about some of the related spam messages that PhishMe has recorded recently.

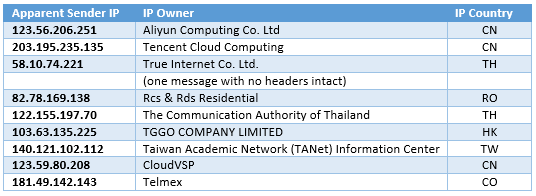

Table 2 below summarizes details gleaned from the headers of the spam messages we examined. As Table 2 shows, no two of the messages were sent from the same location.

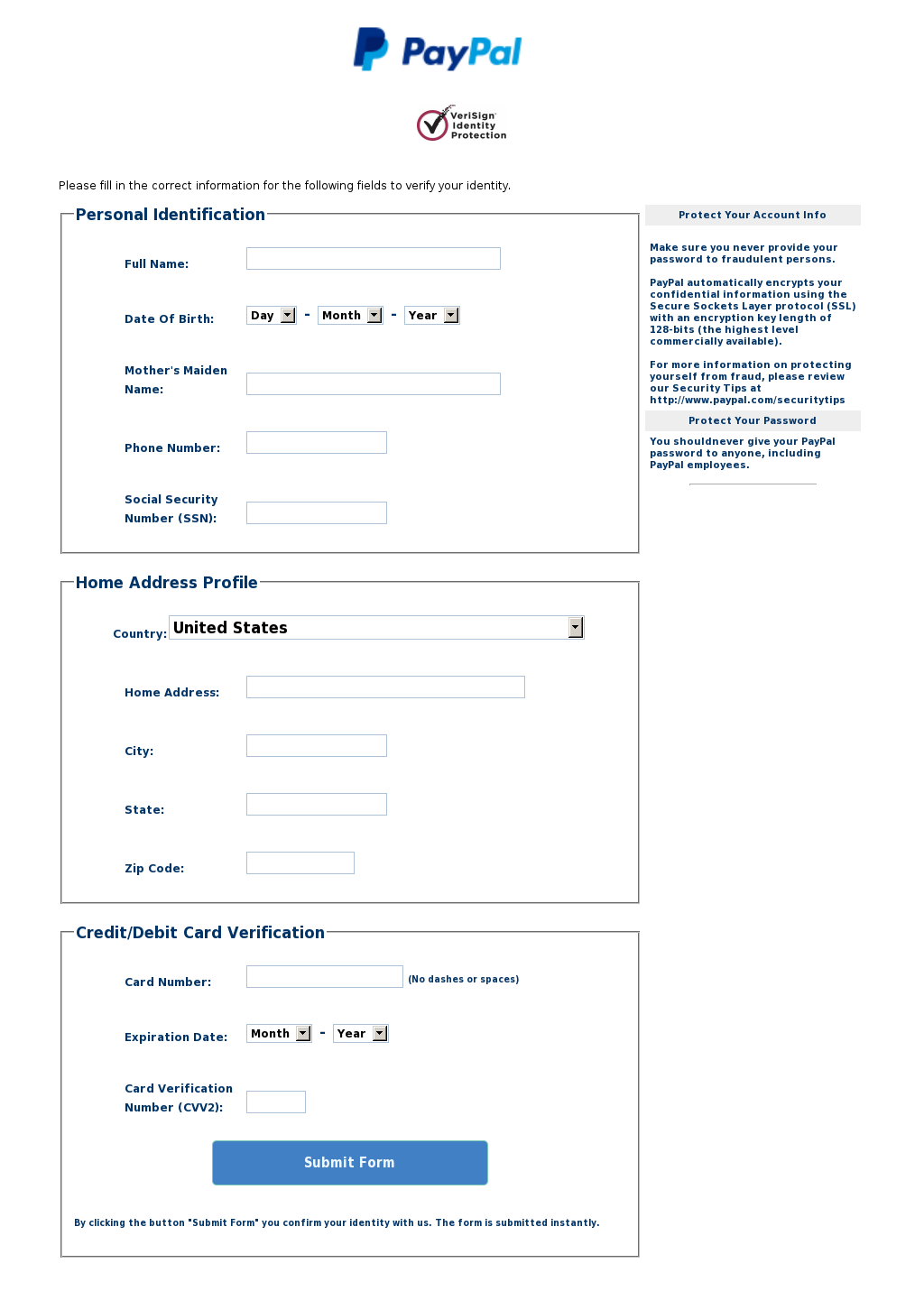
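Header analysis like that behind Table 2 can be partly automated. This sketch assumes raw messages as bytes and bracketed IPv4 addresses in `Received` headers (a common but not universal format). It takes the oldest publicly routable address in each message’s `Received` chain as the apparent origin and checks that no two messages share one. The function names are hypothetical, and the oldest `Received` headers can be forged by the sender, so a real analysis should trust only hops recorded by known infrastructure.

```python
import email
import ipaddress
import re
from email import policy
from typing import Optional

# Bracketed IPv4 literal, e.g. "from host ([203.0.113.5])"; formats vary by MTA.
BRACKETED_IPV4 = re.compile(r"\[(\d{1,3}(?:\.\d{1,3}){3})\]")


def origin_ip(raw_message: bytes) -> Optional[str]:
    """Return the oldest publicly routable IPv4 address in the Received chain."""
    msg = email.message_from_bytes(raw_message, policy=policy.default)
    # Each relay prepends its Received header, so the last one is the oldest hop.
    for header in reversed(msg.get_all("Received", [])):
        for candidate in BRACKETED_IPV4.findall(str(header)):
            try:
                ip = ipaddress.ip_address(candidate)
            except ValueError:  # e.g. "999.1.1.1"
                continue
            if ip.is_global:
                return candidate
    return None


def all_distinct_origins(raw_messages: list[bytes]) -> bool:
    """True if no two messages share an identified origin address."""
    origins = [origin_ip(raw) for raw in raw_messages]
    known = [ip for ip in origins if ip is not None]
    return len(known) == len(set(known))
```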

Once the victim completes the form (name, date of birth, mother’s maiden name, phone number, Social Security number, home address, and credit card number, expiration date, and CVV), clicking “Submit Form” actually sends all of those details to a criminal. The submitted data is handled by PHP code hosted on domain names registered by fraudsters. A sample of the form can be seen in Figure 2 below. The PayPal logo is fetched from the real PayPal website, and in most of the samples reviewed, the VeriSign logo loads from a Polish website, b2bonline.pl.

Some of these obfuscated attachments were mentioned by the Twitter account @techhelplist on March 14th, 16th, and 22nd, and April 25th, and, as the tweeted screenshots hint, a researcher can easily view the de-obfuscated code using Firebug. In the DOM tab of Firebug, we can even see the name of the Action script, as shown in the red boxes in Figure 3 below. When victims submit their form details, this script redirects them to the legitimate PayPal site, a step meant to convince the victim that nothing is amiss.

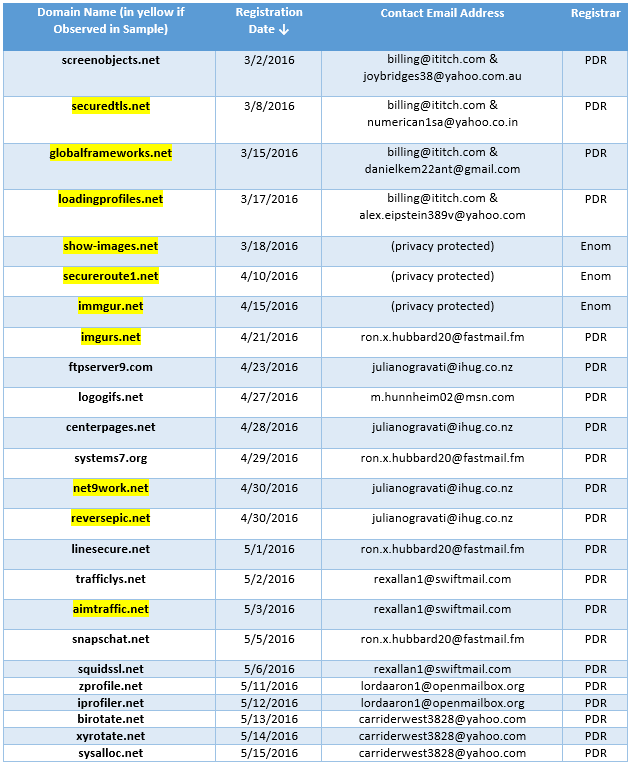
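We have not reproduced the exact obfuscation these samples used, so the sketch below assumes one common pattern: HTML written out via `document.write(unescape('...'))` with percent-encoded markup. It approximates JavaScript’s `unescape()` (both `%XX` and `%uXXXX` sequences) in Python and then searches the decoded text for a form action. The regular expressions and function names are illustrative assumptions; layered or custom encodings would defeat this static approach, which is why rendering the page and reading the DOM, as with Firebug, is more general.

```python
import re

# Assumed obfuscation pattern: document.write(unescape('...percent-encoded HTML...')).
UNESCAPE_CALL = re.compile(r"unescape\(\s*(['\"])(.*?)\1\s*\)", re.DOTALL)
# JavaScript's unescape() turns %uXXXX and %XX escapes into single characters.
ESCAPE_SEQ = re.compile(r"%u([0-9a-fA-F]{4})|%([0-9a-fA-F]{2})")
# Value of an action attribute on a <form> tag (quoted or unquoted).
FORM_ACTION = re.compile(r"<form\b[^>]*?\baction\s*=\s*['\"]?([^'\"\s>]+)", re.IGNORECASE)


def js_unescape(text: str) -> str:
    """Approximate JavaScript's unescape() for %XX and %uXXXX sequences."""
    return ESCAPE_SEQ.sub(lambda m: chr(int(m.group(1) or m.group(2), 16)), text)


def decoded_actions(html: str) -> list[str]:
    """Find form actions in the raw HTML and inside unescape() payloads."""
    actions = FORM_ACTION.findall(html)
    for _quote, payload in UNESCAPE_CALL.findall(html):
        actions.extend(FORM_ACTION.findall(js_unescape(payload)))
    return actions
```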

PhishMe Intelligence has recorded dozens of examples of these attachments, starting on March 9th and continuing through mid-May. Table 3 below includes registration details for the ten fraud domains that have been observed in samples as hosts for the PHP scripts that handle the stolen PII. Three of them were registered using the same Admin contact email address, billing@ititch.com: securedtls.net, registered on March 8th; globalframeworks.net, registered on March 15th; and loadingprofiles.net, registered on March 17th. The same email address is also associated with the registration of many other domain names since February 24th, most of which either imitate well-known services or have names that reflect fraudulent activity. All 154 domain names related to that address were registered since February 20th, and the only registrar noted among them is PDR, Ltd. dba PublicDomainRegistry.com. IT Itch, a business in New Zealand, provides “privacy protected web solutions with bitcoin payment by default,” according to its website. The registrant email addresses associated with the three domains facilitated by IT Itch are [email protected], [email protected], and [email protected], none of which are recognized as valid addresses by the respective email service providers.

The domain name imgurs.net was also registered on April 21st with PDR, using the email address [email protected], an email address that was also used to register the following names with PDR: systems7.org on April 29th, linesecure.net on May 1st, and snapschat.net on May 5th.

The email address [email protected] was used with PDR on April 30th and May 7th to register the two observed domains net9work.net and reversepic.net, respectively, as well as two additional domain names ftpserver9.com and centerpages.net, registered on April 23rd and 28th, respectively. Not surprisingly, the mail server for ihug.co.nz does not recognize the account for “julianogravati.”

The domains show-images.net, secureroute1.net, and immgur.net were registered with Enom using privacy protection on March 18th, April 10th, and April 15th, respectively.

Finally, the domain aimtraffic.net was registered with PDR on May 3rd by [email protected], an email address also used to register the names trafficlys.net on May 2nd and squidssl.net on May 6th.
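The email-address pivots above amount to grouping domains by a shared registration contact. The sketch below encodes a subset of the registrations described in this post. Because the original addresses are redacted here, `registrant-B`, `registrant-C`, and `registrant-D` are hypothetical placeholder labels, and the privacy-protected Enom registrations are marked `None`, since a privacy service’s contact says nothing about the owner.

```python
from collections import defaultdict
from typing import Optional

# Subset of the registrations described above. "registrant-B/C/D" are
# hypothetical stand-ins for the redacted addresses; None marks the
# privacy-protected Enom registrations, which offer nothing to pivot on.
REGISTRATIONS: list[tuple[str, Optional[str]]] = [
    ("securedtls.net", "billing@ititch.com"),
    ("globalframeworks.net", "billing@ititch.com"),
    ("loadingprofiles.net", "billing@ititch.com"),
    ("imgurs.net", "registrant-B"),
    ("systems7.org", "registrant-B"),
    ("linesecure.net", "registrant-B"),
    ("snapschat.net", "registrant-B"),
    ("net9work.net", "registrant-C"),
    ("reversepic.net", "registrant-C"),
    ("ftpserver9.com", "registrant-C"),
    ("centerpages.net", "registrant-C"),
    ("show-images.net", None),
    ("secureroute1.net", None),
    ("immgur.net", None),
    ("aimtraffic.net", "registrant-D"),
    ("trafficlys.net", "registrant-D"),
    ("squidssl.net", "registrant-D"),
]


def cluster_by_contact(records: list[tuple[str, Optional[str]]]) -> dict[str, set[str]]:
    """Group domains by shared registration contact, skipping privacy-protected ones."""
    clusters: dict[str, set[str]] = defaultdict(set)
    for domain, contact in records:
        if contact is not None:
            clusters[contact].add(domain)
    return dict(clusters)


def pivot(seed: str, records: list[tuple[str, Optional[str]]]) -> list[str]:
    """Return the other domains that share the seed domain's registration contact."""
    for domains in cluster_by_contact(records).values():
        if seed in domains:
            return sorted(domains - {seed})
    return []
```

For example, pivoting on imgurs.net returns linesecure.net, snapschat.net, and systems7.org, while pivoting on a privacy-protected name returns nothing.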

Another domain that @techhelplist tweeted about in connection with an obfuscated PayPal phish may also be related: logogifs.net, registered with PDR on April 27th by [email protected], an address not recognized by Microsoft. The name resolved to the same IP address as several other related domain names during the same time frame.

Examining passive DNS records helps identify additional related names hosted on the same IP addresses during the same time frame: screenobjects.net, zprofile.net, iprofiler.net, birotate.net, xyrotate.net, and sysalloc.net, all of which exhibit the same registration pattern. In all, ten of the domains shown in the summary table below were using CloudFlare services, and service for most of them had already been terminated when we contacted CloudFlare’s Head of Trust and Safety. Examining the true hosts of the phishing content, however, revealed the following details:

- Twelve hosts resolved to the Novogara IP 94.102.49.33 (Quasi Networks–Seychelles).

- Six of the hosts resolved to the German ASN Netzbetrieb’s IP address 81.95.13.41, in a small net block assigned to “Sina Nasiri” of Dubai.

- Three hosts resolved to 188.68.235.69, assigned to “Jacek Politowski” on the Sprint network in Poland.
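The passive DNS pivot described above can be expressed as: for each IP address a seed domain resolved to, collect other names seen on that IP during an overlapping time window. The record layout below (`PdnsRecord` with name, IP, and first/last-seen dates) is a hypothetical simplification of what passive DNS services return. Shared infrastructure such as CDN front ends produces many unrelated co-resident names, so the sketch accepts an optional set of IPs to exclude before pivoting.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PdnsRecord:
    """Hypothetical, simplified passive DNS observation: name seen resolving to ip."""
    name: str
    ip: str
    first_seen: date
    last_seen: date


def overlaps(a: PdnsRecord, b: PdnsRecord) -> bool:
    """True if the two observation windows share at least one day."""
    return a.first_seen <= b.last_seen and b.first_seen <= a.last_seen


def cohosted(seed: str, records: list[PdnsRecord],
             exclude_ips: frozenset[str] = frozenset()) -> set[str]:
    """Names seen on the seed's IPs during overlapping windows, minus excluded (e.g. CDN) IPs."""
    seed_rows = [r for r in records if r.name == seed and r.ip not in exclude_ips]
    related: set[str] = set()
    for s in seed_rows:
        for r in records:
            if r.name != seed and r.ip == s.ip and overlaps(s, r):
                related.add(r.name)
    return related
```

Requiring overlapping windows, rather than just a shared IP, filters out earlier or later tenants of a reassigned address.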

Summary

The spam messages do not appear to have been sent from the same servers that hosted the fraud domains, and the HTML attachments were clearly structured to hide the locations of the exfiltration scripts. Because not all anti-phishing solutions recognize the hidden action scripts, we are forced to rely more heavily on our smartest computer, the human, who can be taught to recognize that an online service provider such as PayPal would not email you a form from a generic address, ask you to divulge numerous personal details, and then transmit them when you click Submit on a local file.

Further, these spam and phishing page examples show that phishers continue to hone their ability to avoid detection by adding multiple layers of complexity to their processes, and by seeking out domain registration services where they can fly under the radar and hosting providers that may have no system in place to recognize this type of abuse.

References

hxxps://twitter.com/Techhelplistcom/status/709406997532160000

hxxps://twitter.com/Techhelplistcom/status/710191257809608704

hxxps://twitter.com/Techhelplistcom/status/712398368387891200

hxxps://twitter.com/techhelplistcom/status/724562071967256576

hxxps://twitter.com/Techhelplistcom/status/725681196177313793